This month I was fortunate enough to co-author a really interesting paper in Radiology entitled Chest Radiographs in Congestive Heart Failure: Visualizing Neural Network Learning 1. We described a novel use for GANs (more about these shortly) in helping to visualize disease predictions made by AI - and the results were quite literally revealing.

Like it or not, artificial intelligence has become a big deal in radiology of late, and while it is almost certainly over-hyped, it is likely that we’ll soon see some integration into clinical practice. In this post, I want to briefly describe our research, show some animated GIFs (always fun) and speculate on the future.

First, a little background on GANs…

What do the three above images have in common? You probably can't tell instantly, but the answer is that none of them are real. Each image was artificially created by a GAN, a Generative Adversarial Network 2,3. The x-ray, the bedroom, and the celebrity dude are all totally fake - although you could argue that every celebrity is fake, but that’s another issue.

GANs are a fascinating form of deep learning where two neural networks compete against each other (adversarially) to learn how to create fake data. The generator network is tasked with creating fake data (in our case fake chest x-rays) and the discriminator network is tasked with telling fake data apart from real data (spotting the fake chest x-rays among the real ones).

Initially, the generator is terrible at producing fake x-rays and the discriminator spots them all. But the generator learns from these rejections and over many cycles it gets better and better at making x-rays that appear realistic. Likewise, the discriminator gets better and better at spotting even subtle forgeries. Eventually, the generator learns how to create fake data that is indistinguishable from real data (within the limits of its architecture).
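For the curious, the tug-of-war described above can be sketched as two loss functions. This is a minimal illustration that assumes the original GAN formulation from reference 2 (with the commonly used non-saturating generator loss); the function names and the example scores are made up for illustration, and the training objective used in our paper may differ.

```python
import numpy as np

def discriminator_loss(d_real, d_fake, eps=1e-12):
    """Binary cross-entropy: real images should score near 1, fakes near 0."""
    real_term = -np.mean(np.log(d_real + eps))
    fake_term = -np.mean(np.log(1.0 - d_fake + eps))
    return float(real_term + fake_term)

def generator_loss(d_fake, eps=1e-12):
    """Non-saturating generator loss: the generator wants its fakes to score near 1."""
    return float(-np.mean(np.log(d_fake + eps)))

# Hypothetical discriminator scores (probability that an image is real).
# Early in training the discriminator spots every fake...
early_real = np.array([0.95, 0.90, 0.97])
early_fake = np.array([0.05, 0.10, 0.02])
# ...and late in training it can do no better than a coin flip.
late_real = np.array([0.5, 0.5, 0.5])
late_fake = np.array([0.5, 0.5, 0.5])

print("generator loss, early:", generator_loss(early_fake))
print("generator loss, late: ", generator_loss(late_fake))
print("discriminator loss, early:", discriminator_loss(early_real, early_fake))
print("discriminator loss, late: ", discriminator_loss(late_real, late_fake))
```

The generator's loss falls as its fakes start to fool the discriminator, while the discriminator's loss rises towards the coin-flip value of 2 ln 2. In real training, both networks are updated in alternation using gradients of these losses.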

Unlike fake news, fake data is a good thing and can be really, really useful... tremendously useful. I know that seems counterintuitive at first (and at second and at third) but it is true. There are already hundreds of applications for GANs that have been described in the scientific literature, across many disparate fields. So far, however, their use in radiology has been relatively limited.

Now on to our real fake research... and GIFs!

Our idea was to use the example of heart failure prediction to see if a chest x-ray GAN could help reveal the image features learned by a neural network. We basically asked, “Okay AI, if you’re so confident that this chest x-ray shows heart failure, show me what you would change on the x-ray to remove the disease?” The expectation was that a well-trained model would highlight traditional features of cardiac failure like cardiomegaly (arrowheads), pleural effusions (arrow) and airspace opacity (star) - which is exactly what it did.

The full technical details are in the paper and supplement 4, but the quick summary is that we used ~100,000 chest x-rays to create a generator capable of producing low-resolution fakes (128 x 128 pixels) from a latent space. We then encoded ~7,000 real chest x-rays into the latent space, trained a smaller neural network to predict heart failure (BNP levels) on these representations, statistically manipulated them to remove the heart failure prediction, and then decoded the result into a fake “healthy” version of the original x-ray.

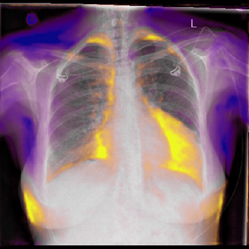
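The encode, predict, manipulate and decode steps described above can be sketched in a few lines. Everything here is a toy stand-in rather than our actual model: the decoder and predictor are random linear maps, the latent size and image size are arbitrary, and the "manipulation" is a simple walk against the predictor's gradient, which is an assumption made for illustration (the method we actually used is described in the paper and supplement).

```python
import numpy as np

rng = np.random.default_rng(0)
LATENT_DIM = 16   # hypothetical latent size
IMAGE_SIDE = 8    # toy resolution standing in for 128 x 128

# Hypothetical stand-ins for the trained generator and heart failure predictor.
decoder_weights = rng.normal(size=(LATENT_DIM, IMAGE_SIDE * IMAGE_SIDE))
predictor_weights = rng.normal(size=LATENT_DIM)

def decode(z):
    """Map a latent vector to a (toy) image."""
    return (z @ decoder_weights).reshape(IMAGE_SIDE, IMAGE_SIDE)

def predict(z):
    """Logistic predictor: probability of heart failure given a latent vector."""
    return 1.0 / (1.0 + np.exp(-(z @ predictor_weights)))

def remove_prediction(z, target=0.1, step=0.01, max_steps=1000):
    """Move z until the predicted probability drops to the target or below."""
    z = z.copy()
    for _ in range(max_steps):
        if predict(z) <= target:
            break
        # The logit w.z is linear in z, so its gradient is simply w;
        # stepping against it lowers the prediction.
        z -= step * predictor_weights
    return z

# A pretend "encoded x-ray", constructed so that its logit is exactly 4
# (a predicted probability of about 0.98).
noise = rng.normal(size=LATENT_DIM)
w = predictor_weights
z_original = noise + ((4.0 - noise @ w) / (w @ w)) * w

z_healthy = remove_prediction(z_original)
change = decode(z_healthy) - decode(z_original)  # what the model "would change"

print("prediction before:", predict(z_original))
print("prediction after: ", predict(z_healthy))
```

The `change` array is the raw material for the visualization: it records, pixel by pixel, how the decoded image differs once the prediction has been removed.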

By superimposing the predicted change over the original x-ray, we create what we call a Generative Visual Rationale (GVR). The orange represents density that the model would remove and purple density that the model would add in order to remove the prediction of heart failure. Here’s an animated GIF (as promised) showing the model dynamically lowering its heart failure prediction and the associated GVR.
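As a rough sketch of how such an overlay could be built, the snippet below tints a grayscale image orange where density would be removed and purple where it would be added. It assumes pixel values in the range 0 to 1 with brighter meaning denser; the function name, the exact colour values and the blending rule are illustrative choices, not the rendering used in the paper.

```python
import numpy as np

ORANGE = np.array([1.0, 0.5, 0.0])  # density the model would remove
PURPLE = np.array([0.5, 0.0, 1.0])  # density the model would add

def visual_rationale(original, healthy):
    """Blend the predicted change over the original grayscale image (values in [0, 1])."""
    diff = healthy - original
    removed = np.clip(-diff, 0.0, 1.0)[..., None]  # pixels that get darker
    added = np.clip(diff, 0.0, 1.0)[..., None]     # pixels that get brighter
    base = np.repeat(original[..., None], 3, axis=2)  # grayscale to RGB
    # At each pixel at most one of removed/added is non-zero,
    # so the three blend weights always sum to 1.
    overlay = base * (1.0 - removed - added) + removed * ORANGE + added * PURPLE
    return np.clip(overlay, 0.0, 1.0)

# Tiny 2 x 2 example: one pixel loses density, one gains it, two are unchanged.
original = np.array([[1.0, 0.0],
                     [0.5, 0.5]])
healthy = np.array([[0.0, 1.0],
                    [0.5, 0.5]])
gvr = visual_rationale(original, healthy)
print(gvr.shape)
```

Unchanged pixels keep their original gray value, so the anatomy stays visible underneath the coloured change.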

Seeing beyond the expected

However, heart failure was not all that the GVRs revealed. You’ll note above that the chest wall highlights purple and breast tissue orange. That's odd, right? But not when you consider that we used B-type natriuretic peptide blood levels (BNP) as our label for heart failure and that BNP has a known independent negative association with obesity and positive association with female gender 5,6. So the model was, in fact, using image features not associated with heart failure to improve its BNP predictions, and the GVRs conveyed this.

Side markers were another predictive factor that the GVRs exposed. The model would often add a conventional (non-digital) side marker when attempting to remove a heart failure prediction, probably because at our institution conventional side markers are primarily used in non-urgent settings where patients are more likely to be well with a low pre-test probability for heart failure. So the AI was using the external marker to help game its predictions. Look back at this first GIF to see this happen on the patient's right.

We also took normal chest x-rays and asked the model to give them heart failure (inverse GVRs). These confirmed again that cardiomegaly, pleural effusions and airspace opacity had been learned as signs of heart failure, but also that pacemakers had been learned - materializing as if from nowhere in another GIF!

You might ask - were we simply imposing our own preconceived notions on the GVRs? To test this, we compared GVRs from our well-trained model to those from a deliberately overfitted model that had seen the test data during training (a big deep learning no-no). Our hypothesis was that the overfitted model would perform extremely well on the test data (because of memorization) but that it would not produce very meaningful GVRs. Sure enough, blinded GVR assessment by a radiologist and a radiology registrar confirmed this: only 36% of GVRs from the overfitted model highlighted potential heart failure features, compared to 80% from the well-trained model.

So, what does this mean for the future?

Well, arguably for the first time we now have a method for visualizing AI predictions in medical imaging that goes beyond identifying which image patches contribute to the final prediction. We have a technique that can reveal global image features in combination. From a safety perspective, this is a welcome advance, as it allows radiologists to confirm that individual predictions are reasonable, and to better detect AI faults, cheating, and biases.

The major current limitation to our method is GAN resolution, although it seems likely that this will be overcome 3. The architecture needed for GVRs is also different to commonly used neural networks and so this may further limit use, especially if the predictive power of GVR-friendly techniques is inferior.

Extrapolating further, it is conceivable that GVRs could soon be used to uncover imaging signs of disease previously unknown to humans. It's also conceivable that instead of visually predicting disease, the technique could be used to visually predict the future. “Hey AI, show me what you think this lesion/mass/bleed will look like tomorrow? Or next year?” The amount of follow-up imaging performed on our patients is so large, and time is such an accessible and definite label, that training a radiology "pre-cognition" system is possibly not that far-fetched.

About The Authors: Dr. Andrew Dixon (last author, blog author) is a radiologist and Co-Director of Radiology Training at the Alfred Hospital in Melbourne. He is Academic Director for Radiopaedia. Dr. Jarrel Seah (first author) is a radiology registrar at the Alfred Hospital in Melbourne. Dr. Jennifer Tang (second author) is a radiology registrar at the Royal Melbourne Hospital. Andy Kitchen (third author) is a machine learning researcher and organizer of the Melbourne Machine Learning & AI Meetup. Associate Professor Frank Gaillard (fourth author) is a neuroradiologist and Director of Research in the University of Melbourne Department of Radiology and Royal Melbourne Hospital. He is Founder and Editor in Chief of Radiopaedia.

References

1. Seah JCY, Tang JSN, Kitchen A, Gaillard F, Dixon AF. Chest Radiographs in Congestive Heart Failure: Visualizing Neural Network Learning. (2018) Radiology. doi:10.1148/radiol.2018180887

2. Goodfellow, Ian J., Pouget-Abadie, Jean, Mirza, Mehdi, Xu, Bing, Warde-Farley, David, Ozair, Sherjil, Courville, Aaron, Bengio, Yoshua. Generative Adversarial Networks. (2014) arxiv.org/abs/1406.2661

3. Karras, Tero, Aila, Timo, Laine, Samuli, Lehtinen, Jaakko. Progressive Growing of GANs for Improved Quality, Stability, and Variation. (2017) arxiv.org/abs/1710.10196

4. Seah, Jarrel, Tang, Jennifer, Kitchen, Andy, Seah, Jonathan. Generative Visual Rationales. (2018) arxiv.org/abs/1804.04539

5. Clerico A, Giannoni A, Vittorini S, Emdin M. The paradox of low BNP levels in obesity. (2012) Heart failure reviews. 17 (1): 81-96. doi:10.1007/s10741-011-9249-z

6. Hsich EM, Grau-Sepulveda MV, Hernandez AF, Eapen ZJ, Xian Y, Schwamm LH, Bhatt DL, Fonarow GC. Relationship between sex, ejection fraction, and B-type natriuretic peptide levels in patients hospitalized with heart failure and associations with inhospital outcomes: findings from the Get With The Guideline-Heart Failure Registry. (2013) American heart journal. 166 (6): 1063-1071.e3. doi:10.1016/j.ahj.2013.08.029
